Section 1 of 5 · 10 min read

What AI Actually Is

Most arguments about AI in climate are really arguments about which caricature to believe — the miraculous accelerator or the catastrophic distraction. Neither is useful. What's useful is understanding what these systems actually do.

AI today mostly means machine learning

"Artificial intelligence" is not a single technology. It covers a wide range of approaches, but what people mean when they say "AI" today is almost always machine learning — systems that find patterns in data rather than following hand-coded rules. Large language models (LLMs) like Claude, GPT-4, and Gemini are the dominant form right now.

LLMs were made possible by the transformer architecture, introduced in the 2017 paper "Attention Is All You Need." At their core, LLMs are trained to predict the most plausible next token (a word or word fragment) in a sequence. The result is essentially an extraordinarily sophisticated pattern-completion system, trained on a very large share of the publicly available text on the internet. That's powerful. It's also exactly why LLMs fail in specific ways: they have no reliable built-in way to distinguish what they know from what they're confidently confabulating.
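To make "predict the most plausible next token" concrete, here is a deliberately tiny sketch. It is not how a transformer works internally: it is a simple bigram counter over a made-up four-sentence corpus, and the names `CORPUS`, `train_bigram_counts`, and `predict_next` are invented for illustration. It shows only the core idea that the output reflects how often a pattern appeared in the training text, not whether it is true.

```python
from collections import Counter, defaultdict

# Tiny hypothetical corpus; real models train on vastly more text.
CORPUS = (
    "solar panels convert sunlight to electricity . "
    "solar panels convert sunlight to power . "
    "solar panels convert sunlight to electricity . "
    "wind turbines convert wind to electricity ."
)

def train_bigram_counts(text):
    """Count how often each token is followed by each other token."""
    tokens = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, token):
    """Return the most frequent follower of `token` and its share of the counts."""
    followers = counts.get(token)
    if not followers:
        return None, 0.0  # never seen in training: nothing to complete
    best, n = followers.most_common(1)[0]
    return best, n / sum(followers.values())

counts = train_bigram_counts(CORPUS)
print(predict_next(counts, "to"))        # ('electricity', 0.75)
print(predict_next(counts, "turbines"))  # ('convert', 1.0)
```

Note the second result: the toy model is "100% confident" about what follows "turbines" on the strength of a single example. The score measures pattern frequency in the training text, which is why a fluent, confident completion is not evidence of a correct one.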

LLMs don't reason. They predict. That distinction isn't pedantic — it's why they produce confident wrong answers, and why verification is always your responsibility, not theirs.

Tool AI vs. Agentic AI — a control distinction that matters

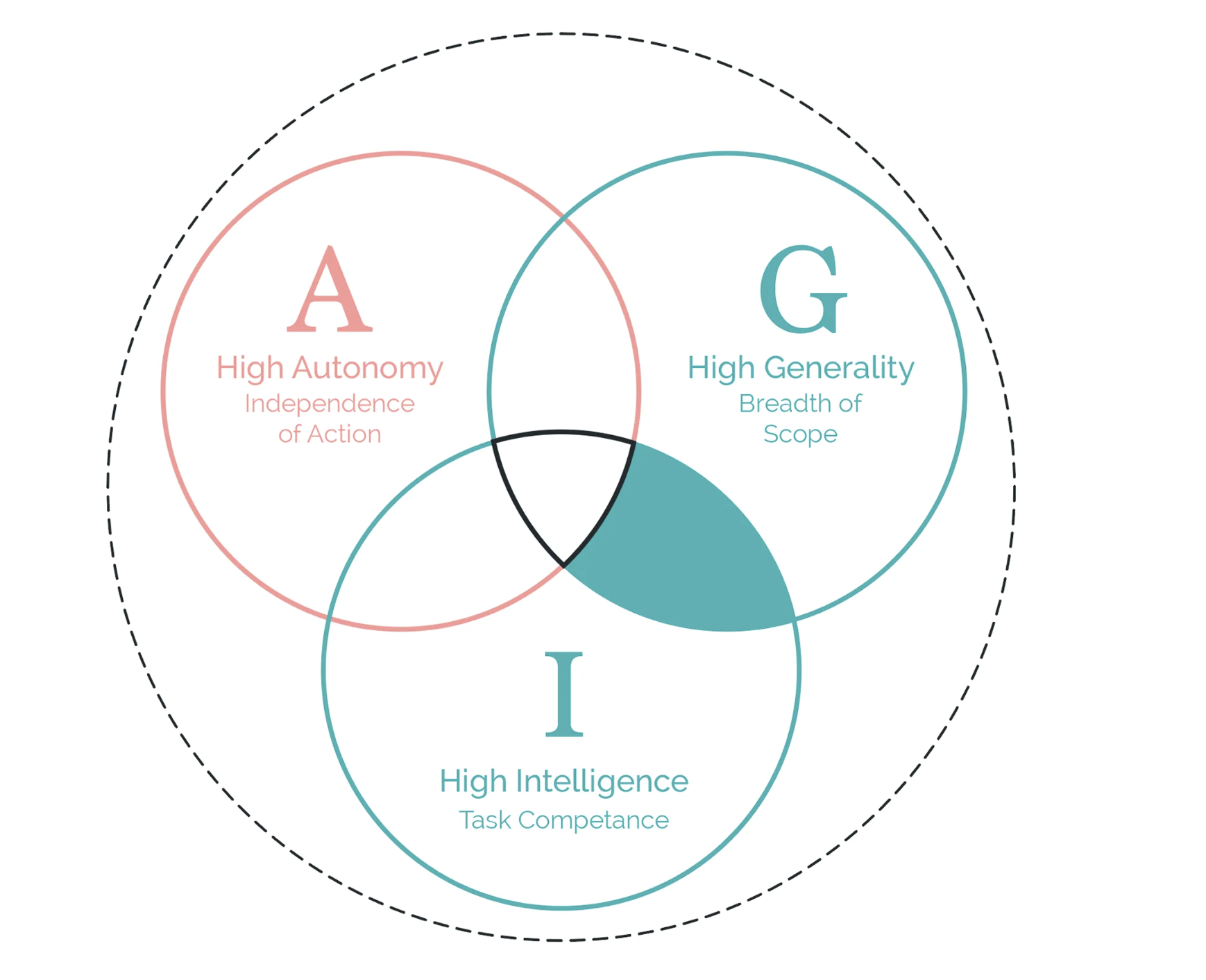

A useful framework describes AI systems along three dimensions: Autonomy (how independently a system acts), Generality (how many domains it can operate in), and Intelligence (how sophisticated its reasoning is). For climate workers, the most practically useful distinction is between Tool AI and Agentic AI, which differ mainly on the autonomy dimension.

Tool AI responds when you prompt it. You ask, it answers. You remain in control of every action. This is what most climate professionals are using today: Claude, ChatGPT, or Gemini as a thought partner or drafting assistant.

Agentic AI takes actions in the world without waiting for each instruction — running code, browsing the web, sending emails, spinning up other AI agents. The oversight implications are completely different. When an AI can act autonomously across systems, you need governance structures that were never needed when AI just answered questions.
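The control difference can be sketched in a few lines. Everything here is hypothetical: `fake_model_plan` stands in for a real model call, the `reversible` flag is an invented risk label, and the `approve` callback is just one possible governance gate. No real agent framework or vendor API is being shown.

```python
from dataclasses import dataclass

@dataclass
class Action:
    name: str         # e.g. "send_email"
    reversible: bool  # hypothetical risk flag, chosen for illustration

def fake_model_plan(task):
    """Stand-in for a model call: returns a fixed, made-up plan."""
    return [
        Action("search_web", reversible=True),
        Action("run_analysis_code", reversible=True),
        Action("send_email", reversible=False),
    ]

def tool_mode(task):
    """Tool AI: return suggestions only. The human performs every action."""
    return [action.name for action in fake_model_plan(task)]

def agentic_mode(task, approve):
    """Agentic AI: the system carries out the plan itself.

    `approve` is a human-in-the-loop callback consulted before any
    irreversible action (an assumed policy, not a standard one).
    """
    log = []
    for action in fake_model_plan(task):
        if not action.reversible and not approve(action):
            log.append(("blocked", action.name))
            continue
        log.append(("executed", action.name))  # a real agent would act here
    return log

print(tool_mode("draft grant report"))
print(agentic_mode("draft grant report", approve=lambda action: False))
```

In `tool_mode` nothing happens until a person acts on the suggestions. In `agentic_mode` the only point of human control is whatever gate was designed in beforehand, which is why the governance question has to be settled before deployment rather than after.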

Figure (image 1): The AGI framework (Autonomy, Generality, Intelligence), three dimensions for understanding any AI system.

The general-purpose technology logic

Some technologies are specific — a solar panel converts sunlight to electricity, a cardiac stent keeps arteries open. General-purpose technologies work differently. Electricity didn't care what it powered. The internet didn't choose which businesses would emerge. AI is in this category — it's infrastructure that reshapes whatever domain it's embedded in.

Electricity's history is instructive: it took roughly 40 years for productivity gains to show up measurably in economic statistics, and the gains were not evenly distributed. The same technology that made modern hospitals possible also powered sweatshops. What determined the outcome was not the technology itself but the governance decisions and institutional arrangements around it.

Writer Ada Palmer captures this as the "amplifier model": technology amplifies existing systems. It doesn't choose direction. A surveillance-driven business model produces surveillance AI. A climate-equity-centered organization, if it makes deliberate choices, can produce something different.

AI doesn't choose directions. Systems and governance do. This is the operating assumption of everything that follows in this course.

Who controls it is not a neutral fact

Frontier AI development requires billions of dollars of compute, enormous proprietary datasets, and engineering teams drawn from a tiny global talent pool. The result is that a handful of companies (OpenAI, Anthropic, Google DeepMind, Meta AI), mostly based in the United States and facing significant Chinese competition, control the systems the rest of the world uses. This is a structural fact.

Open-source models, or more precisely open-weight models such as Llama and Mistral, change the picture somewhat. They can be audited, fine-tuned, and run without depending on a vendor. But deploying them requires technical capacity, and that requirement creates its own kind of concentration. Neither approach is neutral.

For climate work specifically, this matters. Climate action is a fundamentally distributed problem — thousands of actors, many in the Global South, needing to coordinate, monitor, and build. Infrastructure controlled by a small number of private companies in two countries is a risk worth taking seriously, not a background assumption to accept.