Section 4 of 5 · 14 min read

The AI Co-Creation Workflow

You now have two complete specifications: a structural blueprint (Story Spine, causal chain, hero metric) and an audience spec (value frame, distance strategy, emotional calibration). Neither produces the actual output. This section is where production begins.

Why AI drafts fail

Most people default to one of two mental models for AI in writing. The first: “AI writes for me.” The second: “I write; AI assists with grammar.” Both produce inferior work. The first outsources judgment. The second wastes the technology's most powerful capability.

When AI drafts fail — and they fail in predictable ways — the root cause is almost always the same: the human didn't define the vision sharply enough before prompting. “You're a climate expert, write a blog post about deforestation” gets you a generic, serviceable piece that could have been written by anyone. It has no specific audience, no value frame, no hero metric, no Story Spine, no defined output format. The AI has no choice but to average across everything it knows about climate blogs.

The fix is always Step 1 of the workflow below, Define the Vision: sharper intent produces sharper output. AI handles the parts of production that benefit from speed and variation: generating drafts, testing frames, multiplying formats. You handle the parts that require judgment, domain expertise, and accountability: defining intent, evaluating quality, ensuring accuracy, making the final call.

If you're unhappy with what AI gives you, the first question is always whether you gave it enough to work with. Generic outputs signal a missing Step 1.

The four-step co-creation workflow

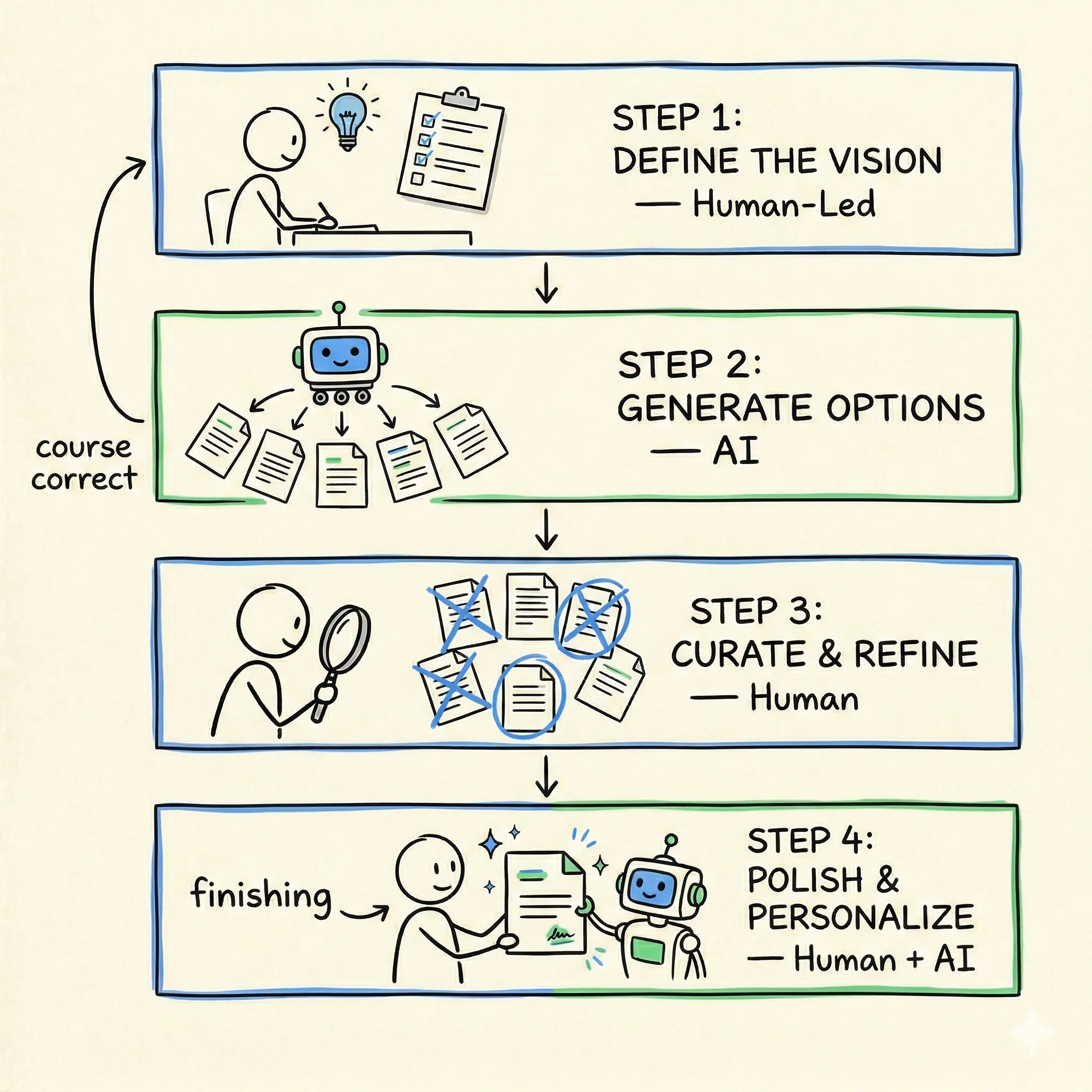

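The workflow is learnable and repeatable. Four steps, every time, in this order: (1) Define the Vision, (2) Generate Options, (3) Curate and Refine, (4) Polish and Personalize.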

Define the Vision

Before opening any tool, make the strategic decisions: Who is my audience? What value frame am I using? What is my hero metric? What is my Story Spine? What format does the output need to be? These choices come from the frameworks in Sections 2 and 3. AI doesn't make them for you, but it can speed up the groundwork by gathering evidence, drafting a spec from your uploaded frameworks, and surfacing research you can refine.

Generate Options

Prompt the AI to produce multiple drafts, angles, or variations. Cast a wide net. Ask for five versions, not one. Ask for versions you wouldn't have written yourself. The value of this step is range: seeing the evidence through frames you might not have considered. Your prompting skills — context setting, few-shot examples, role assignment — directly power this step.
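One way to make "five versions, not one" a habit is to template the request. The sketch below is illustrative only: `options_prompt` is a hypothetical helper name, the wording of the request is an assumption you should adapt, and it only builds the text to paste into whatever tool you use. It calls no AI service.

```python
def options_prompt(brief: str, n: int = 5) -> str:
    """Build one request that asks for n clearly different drafts.

    `brief` is your written Step 1 answers; this function is a
    hypothetical helper, not part of any AI tool's API.
    """
    if n < 2:
        raise ValueError("Ask for at least two versions; the point of this step is range.")
    return (
        f"{brief.strip()}\n\n"
        f"Write {n} distinct versions of this piece. "
        "Each version must take a different angle or opening, "
        "and at least one should be an approach I would be unlikely to write myself. "
        f"Label them Version 1 to Version {n} and add one line under each "
        "explaining what makes it different."
    )
```

Asking for a one-line explanation under each version gives you something concrete to evaluate in Step 3, beyond which draft sounds best.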

Curate and Refine

Select the strongest elements across the options. Cut what doesn't serve the spine. Apply domain expertise: Is this claim accurate? Does this framing match what I know about this audience? Would this data point survive a fact-check? This is where the human earns the collaboration. You're not just picking the best-sounding option — you're evaluating each one against the strategic decisions you made in Step 1.

Polish and Personalize

Final passes. Use AI for tightening language, checking consistency, and formatting for the target platform (LinkedIn vs. email vs. policy brief). Add the personal touches that make the output yours: the specific example only you would know, the voice your audience recognizes, the judgment call about what to leave out. This step comes last for a reason: if Steps 1–3 aren't solid, polishing makes a bad output look professional, which is worse than looking rough.

The sandwich model

A simpler way to hold the workflow in mind: human bread on both sides, AI filling in the middle.

Top bread (human-led): Strategy, intent, audience analysis, Story Spine, value frame selection. Everything from Sections 2 and 3. These are the decisions that determine quality, and they are yours to make.

Filling (AI): Generation, variation, speed, scale. Multiple drafts, multiple formats, multiple frames. This is where AI can compress weeks of production into minutes.

Bottom bread (human-led): Curation, verification, voice, final judgment. Is this accurate? Does it sound like me, or like the messenger I'm equipping? Does it pass the Section 2 and Section 3 checklists? Would I stake my professional reputation on this?

The collaboration has four axes: content generation, structural assistance, creative input, and analytical contribution. The human-AI mix on each axis shifts with the task and the stakes. A low-stakes internal brainstorm can lean heavily on AI generation. A high-stakes policy brief demands human control on every axis, with AI contributing little beyond drafting speed.

Building the complete brief before touching AI

Step 1 does more to determine output quality than any other step. Before you open any AI tool, you need to have answered five questions explicitly: not in your head, but written down in a format that can become the opening of your prompt.

Audience

Who specifically is reading or watching this? What is their role, their existing beliefs, and what do they need to feel before they can act?

Value frame

Which of the six frames is the access point for this audience? (Economic, health, security, stewardship, justice, local pride.)

Hero metric

What is the single most important number in your analysis? The one that, if the audience remembers nothing else, carries the argument.

Story Spine

Draft the six beats before you prompt. Even a rough outline of each beat prevents the AI from flattening your narrative into a generic structure.

Output format

What exactly are you producing? A LinkedIn post (800 words, three sections)? An email subject line + 200-word body? A slide deck outline? Format determines everything from tone to length.

When all five are answered, you're not writing a prompt — you're issuing a production order. The AI knows who it's writing for, what emotional journey they need to take, what the anchor claim is, what structure to follow, and what the deliverable looks like. The output will be dramatically more useful than anything produced from an open-ended request.
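The five answers can be treated as a small data structure that refuses to become a prompt until every field is filled in. The following is a minimal sketch under stated assumptions: the `Brief` class, its field names, and the rendered layout are all hypothetical, chosen for illustration. Only the five headings and the six value frames come from this section.

```python
from dataclasses import dataclass, fields

# The six value frames named in this section.
VALUE_FRAMES = {"economic", "health", "security", "stewardship", "justice", "local pride"}


@dataclass
class Brief:
    """The five Step 1 answers, written down rather than held in your head."""
    audience: str
    value_frame: str
    hero_metric: str
    story_spine: list[str]  # your drafted beats, in order
    output_format: str

    def missing(self) -> list[str]:
        """Names of any answers that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def to_prompt(self) -> str:
        """Render the brief as the opening block of a prompt."""
        gaps = self.missing()
        if gaps:
            raise ValueError(f"Brief incomplete, still missing: {', '.join(gaps)}")
        if self.value_frame.lower() not in VALUE_FRAMES:
            raise ValueError(f"Unknown value frame: {self.value_frame!r}")
        beats = "\n".join(f"  {i}. {beat}" for i, beat in enumerate(self.story_spine, 1))
        return (
            f"Audience: {self.audience}\n"
            f"Value frame: {self.value_frame}\n"
            f"Hero metric: {self.hero_metric}\n"
            f"Story Spine:\n{beats}\n"
            f"Output format: {self.output_format}"
        )
```

The design choice worth noting is that `to_prompt` raises an error instead of filling gaps with defaults. A blank field is a decision you have not made yet, and the AI should not make it for you.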


Verification before publication

AI can generate confident prose with fabricated citations. If you don't check the source, you're the one who publishes the fabrication, and it damages the credibility you've spent your career building.

Every statistic in your final output should trace back to a source you've personally confirmed. The diagnostic question before publishing anything: “Would I put my name on this and defend every claim in it to a skeptical audience?” If the answer requires hesitation, you're not done.
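The tracing rule can be run as a mechanical check before the final read-through. This is a rough sketch, not a complete fact-checking method: `Claim`, `untraced_figures`, and the number pattern are hypothetical names and assumptions, and the sample draft uses made-up figures purely for illustration. The pattern is deliberately simple, so it also flags years and other incidental numbers; treat each hit as a prompt to look, not a verdict.

```python
import re
from dataclasses import dataclass


@dataclass
class Claim:
    figure: str      # the number exactly as it appears in the draft, e.g. "40%"
    source: str      # where you found it
    confirmed: bool  # True only if you personally checked the source


# Matches figures such as 12, 1,200, 3.5 and 40% (a deliberately simple pattern).
NUMBER = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?%?")


def untraced_figures(draft: str, ledger: list[Claim]) -> list[str]:
    """Return each figure in the draft that has no confirmed, sourced ledger entry."""
    confirmed = {c.figure for c in ledger if c.confirmed and c.source}
    flagged: list[str] = []
    for figure in NUMBER.findall(draft):
        if figure not in confirmed and figure not in flagged:
            flagged.append(figure)
    return flagged


# Made-up figures and placeholder sources, for illustration only.
draft = "Forest loss rose 12% in 2020, affecting 1,200 households."
ledger = [
    Claim("12%", "placeholder: report you have read", confirmed=True),
    Claim("1,200", "placeholder: survey not yet checked", confirmed=False),
]
print(untraced_figures(draft, ledger))  # ['2020', '1,200']
```

An empty result only means every number has a ledger entry you marked as confirmed. It says nothing about claims made without numbers, which still need the "would I defend this" test.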

There are also authenticity signals that tell you the collaboration has gone wrong:

- You can't explain why you chose this output over another. You accepted the first option that “sounded good,” which means you skipped Step 3.
- The content could have been written by anyone. No specific evidence, no local hook, no voice that sounds like you. Generic content signals a missing Step 1.
- You used AI to avoid the hard decisions (framing, audience analysis, value alignment) rather than to accelerate production after making them.
- The output “sounds right” but you haven't traced every claim to a verifiable source.

Apply it now

Two exercises for this section. Start with the Step 1 Builder to build the complete brief before you touch AI. Then use the Prompt Clinic to diagnose why specific AI outputs fail — and how to fix them.